Your team just deployed an AI agent to automate incident response. It needs to query your SIEM, update tickets, and potentially disable compromised accounts. The question you're facing: how do you grant it authority without creating a security nightmare?

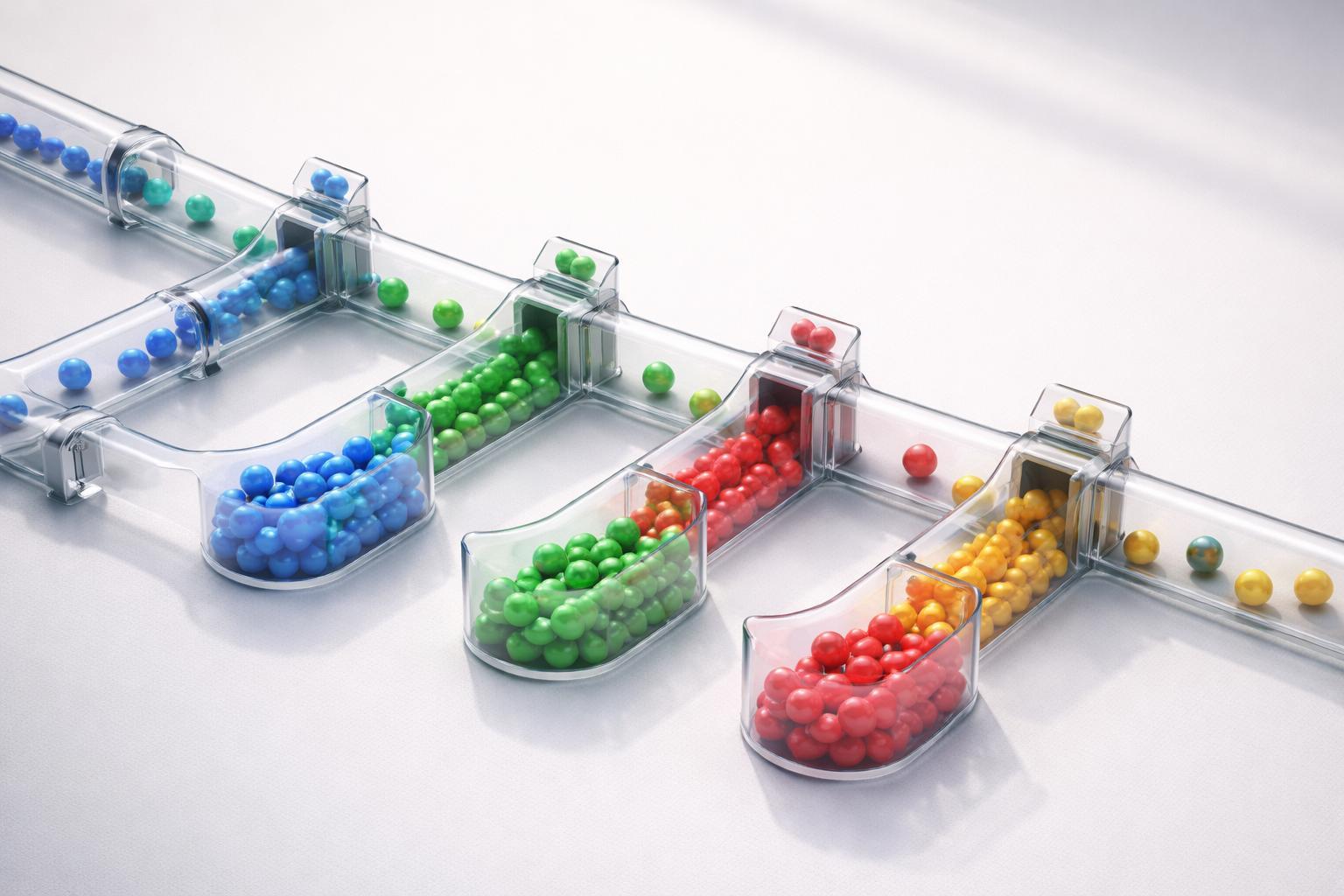

This isn't about whether to use AI agents—you're already using them or evaluating them. The decision is about how you model their authority. Do you treat them like service accounts with static permissions? Do you gate every action through human approval? Or do you build a delegation chain that dynamically validates authority based on who invoked the agent and what they're actually doing?

The Decision You're Facing

You need to choose between three governance models for AI agent authorization:

Static IAM: Grant the agent a fixed set of permissions, similar to a service account.

Approval-gated: Require human confirmation before the agent takes sensitive actions.

Delegation-based: Derive the agent's authority from the identity that invoked it, validated continuously.

Most teams default to static IAM because it's familiar. But AI agents are fundamentally different from traditional service accounts—they're triggered by existing enterprise identities and make autonomous decisions based on context. The authority model you choose determines whether you're securing the agent itself or the entire chain of delegation that empowers it.

Key Factors That Affect Your Choice

Frequency of agent invocation: If your agent runs hundreds of times daily across different contexts, static permissions create a broad attack surface. If it runs weekly in a controlled workflow, static IAM might suffice.

Variability of delegating identities: An agent invoked only by your security team has a narrow trust boundary. An agent accessible to developers, support staff, and automated systems inherits risk from every identity in that chain.

Blast radius of agent actions: Can your agent only read audit logs, or can it modify production databases? The higher the privilege, the more critical it becomes to validate authority in real time.

Identity dark matter in your environment: These are the orphaned accounts, over-provisioned service principals, and stale API keys that exist in every enterprise. AI agents triggered by these identities inherit their flawed authority. If you don't have continuous visibility into who actually has access to what, you can't trust the delegation chain.

Regulatory requirements: PCI DSS v4.0.1 Requirement 8.2.1 requires a unique ID for every user, and Requirement 8.2.2 restricts shared or generic credentials to documented exceptions. SOC 2 criterion CC6.1 covers logical access security over protected information assets. If your agent processes cardholder data or handles sensitive customer information, you need an audit trail showing not just what the agent did, but whose authority it was acting under.
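One way to picture such an audit trail is a record that stores the delegation chain alongside the action. This is a minimal sketch, not a prescribed schema: the class name, field names, and identities below are all hypothetical.

```python
import json
from dataclasses import dataclass, asdict, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentAuditRecord:
    """One audit entry per agent action (all field names are illustrative)."""
    agent_id: str            # the agent's own identity
    action: str              # what the agent did
    target: str              # the resource it acted on
    delegation_chain: tuple  # identities from original invoker to agent, in order
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    @property
    def on_behalf_of(self) -> str:
        # The first identity in the chain is the one whose authority was used.
        return self.delegation_chain[0]

    def to_json(self) -> str:
        return json.dumps(asdict(self), sort_keys=True)


# Hypothetical example: an analyst triggers a playbook, which invokes the agent.
record = AgentAuditRecord(
    agent_id="ir-agent",
    action="disable_account",
    target="user:jdoe",
    delegation_chain=("alice@example.com", "soar-playbook-7", "ir-agent"),
)
print(record.to_json())
```

Keeping the whole chain, rather than only the immediate caller, is what lets a reviewer answer "whose authority was this?" when an automated system sits between the human and the agent.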

Path A: Static IAM (When to Choose This)

Choose static IAM when:

- Your agent performs a single, well-defined function (e.g., daily backup validation).

- It's invoked by a controlled set of identities you actively monitor.

- Its permissions are read-only or limited to non-sensitive resources.

- You can afford to treat it like a service account with privileged access reviews every 90 days.

Implementation approach: Create a dedicated service principal with least-privilege permissions. Document which identities can invoke the agent. Implement logging per NIST SP 800-53 Rev 5 control AU-2 (Event Logging). Set calendar reminders for quarterly access reviews.
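As a rough illustration of this approach, the check below combines a fixed permission set, a documented invoker list, and a log line for every decision. The permission strings, invoker names, and logger name are assumptions made up for the sketch; a real deployment would rely on the cloud or directory provider's own IAM policies.

```python
import logging

logging.basicConfig(level=logging.INFO)
audit_log = logging.getLogger("agent.audit")

# Hypothetical values: a fixed permission set and a documented invoker list.
AGENT_PERMISSIONS = frozenset({"backups:read", "backups:verify"})
ALLOWED_INVOKERS = frozenset({"backup-scheduler", "sre-oncall"})


def authorize_static(invoker: str, permission: str) -> bool:
    """Allow only documented invokers and only the agent's fixed permissions."""
    allowed = invoker in ALLOWED_INVOKERS and permission in AGENT_PERMISSIONS
    # Log every decision, allowed or denied, so reviews have something to audit.
    audit_log.info("invoker=%s permission=%s allowed=%s", invoker, permission, allowed)
    return allowed


print(authorize_static("backup-scheduler", "backups:read"))    # documented invoker, granted permission
print(authorize_static("backup-scheduler", "backups:delete"))  # permission was never granted
```

Note that the answer depends only on membership in two fixed sets. Every allowed invoker gets exactly the same authority, whatever they are trying to do.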

Where this breaks down: Static IAM fails the moment your agent needs to take different actions based on context. If a developer invokes it to check test environment logs and a security engineer invokes it to investigate production incidents, static permissions force you to either over-provision (security risk) or under-provision (operational friction).

Path B: Approval-Gated (When to Choose This)

Choose approval-gated when:

- Your agent performs high-risk actions infrequently (e.g., credential rotation, access revocation).

- You have sufficient staff to respond to approval requests within your SLA.

- Regulatory requirements demand human oversight for specific actions.

- You're in early adoption and want a safety net while you build confidence.

Implementation approach: Build a workflow where the agent proposes actions and waits for human confirmation. Log both the proposal and the approval decision. Implement timeout policies—if no one approves within a set time, the action fails safely.
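The propose, wait, and fail-safe steps can be sketched with a queue standing in for the approval channel. Everything here is illustrative: `Proposal`, `request_approval`, and the in-memory audit list are hypothetical names, and a real system would use a ticketing or chat integration rather than `queue.Queue`.

```python
import queue
from dataclasses import dataclass


@dataclass(frozen=True)
class Proposal:
    agent_id: str
    action: str
    target: str


def request_approval(proposal: Proposal, decisions: queue.Queue,
                     timeout_s: float, audit: list) -> bool:
    """Record the proposal, wait for a human decision, and deny on timeout."""
    audit.append(("proposed", proposal))
    try:
        approved = bool(decisions.get(timeout=timeout_s))
        outcome = "approved" if approved else "rejected"
    except queue.Empty:
        # No decision arrived in time: fail safely by denying the action.
        approved = False
        outcome = "timed_out"
    audit.append((outcome, proposal))
    return approved


audit_trail = []
channel = queue.Queue()
proposal = Proposal("ir-agent", "disable_account", "user:jdoe")

# Nobody responds within the timeout, so the action is not taken.
print(request_approval(proposal, channel, timeout_s=0.1, audit=audit_trail))

# An approver responds, so the action may proceed.
channel.put(True)
print(request_approval(proposal, channel, timeout_s=0.1, audit=audit_trail))
```

Both the proposal and the outcome land in the audit list, including the timeout case, so a missed approval is visible rather than silent.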

Where this breaks down: Approval workflows don't scale. If your agent handles 50 requests per day, you've just created a full-time job. More critically, the human approver rarely has full context about the delegation chain. They see "Agent requests permission to disable account X" but not "Account X was flagged by an automated system that itself has over-provisioned access to sensitive data."

Path C: Delegation-Based with Continuous Observability (When to Choose This)

Choose delegation-based governance when:

- Your agents are invoked by multiple identities with varying privilege levels.

- Agent actions need to adapt based on who's delegating authority.

- You need to detect and respond to anomalous delegation patterns in real time.

- You're managing identity dark matter and can't trust static permission models.

Implementation approach: This requires continuous observability of your identity baseline. You need to know, in real time, which identities have what access, how they're actually using it, and whether that behavior matches their role.

AI agents are triggered, invoked, provisioned, or empowered by existing enterprise identities. The delegation-based model validates authority at invocation time: Does the identity invoking this agent actually have the privileges it claims? Has its behavior pattern changed in ways that suggest compromise? Is this request consistent with the agent's historical use by this identity?

Rather than asking "Should this agent be allowed to disable accounts?" you ask "Should this agent, invoked by this specific identity, at this time, based on this identity's verified behavior baseline, be allowed to disable accounts?"
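The reframed question can be sketched as a decision function that takes the invoker and the request context as inputs, not just the agent. This is one possible shape under simplifying assumptions: the `Invoker` fields, the capability strings, and the use of working hours as a stand-in for a behavior baseline are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Invoker:
    identity: str
    verified_permissions: frozenset  # what observation confirms the identity holds
    baseline_actions: frozenset      # actions this identity normally requests
    baseline_hours: range            # UTC hours when the identity is normally active


@dataclass(frozen=True)
class Decision:
    allowed: bool
    reason: str


# Hypothetical upper bound on what the agent can ever do.
AGENT_CAPABILITIES = frozenset({"siem:query", "tickets:update", "accounts:disable"})


def authorize_delegated(invoker: Invoker, action: str, hour_utc: int) -> Decision:
    """Allow an action only if agent, invoker, and baseline all agree."""
    if action not in AGENT_CAPABILITIES:
        return Decision(False, "agent cannot perform this action")
    if action not in invoker.verified_permissions:
        return Decision(False, "invoker lacks this privilege")
    if action not in invoker.baseline_actions:
        return Decision(False, "action is outside the invoker's baseline")
    if hour_utc not in invoker.baseline_hours:
        return Decision(False, "request is outside the invoker's usual hours")
    return Decision(True, "within delegated authority and baseline")


analyst = Invoker(
    identity="alice@example.com",
    verified_permissions=frozenset({"siem:query", "accounts:disable"}),
    baseline_actions=frozenset({"siem:query", "accounts:disable"}),
    baseline_hours=range(8, 18),
)
print(authorize_delegated(analyst, "accounts:disable", hour_utc=10))
print(authorize_delegated(analyst, "accounts:disable", hour_utc=3))
```

The sketch denies outright on any baseline deviation. Routing those cases to a human approver instead would be an equally reasonable design, combining this model with the approval-gated one.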

This approach addresses identity dark matter directly. When you establish a verified baseline of real identity behavior through continuous observation, you can detect when an AI agent is being invoked by an identity whose actual access exceeds its legitimate need. You catch the orphaned service account with lingering admin rights before the agent it triggers causes damage.
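A simple form of this check compares what each identity has been granted with what it has actually been observed using. The function below is a sketch under stated assumptions: the input shapes, the name `find_excess_access`, and the 90-day staleness window are illustrative choices, not values from any standard.

```python
from datetime import datetime, timedelta, timezone


def find_excess_access(granted, last_used, now, stale_after=timedelta(days=90)):
    """Return {identity: permissions granted but not used within `stale_after`}.

    granted:   {identity: set of permissions}
    last_used: {(identity, permission): datetime of most recent observed use}
    """
    excess = {}
    for identity, permissions in granted.items():
        unused = {
            p for p in permissions
            if (identity, p) not in last_used
            or now - last_used[(identity, p)] > stale_after
        }
        if unused:
            excess[identity] = unused
    return excess


# Hypothetical observations: a legacy service account holds admin rights it
# has not exercised in over a year.
now = datetime(2025, 1, 1, tzinfo=timezone.utc)
granted = {
    "svc-legacy": {"admin:*", "logs:read"},
    "alice@example.com": {"siem:query"},
}
last_used = {
    ("svc-legacy", "logs:read"): now - timedelta(days=2),
    ("svc-legacy", "admin:*"): now - timedelta(days=400),
    ("alice@example.com", "siem:query"): now - timedelta(days=1),
}
print(find_excess_access(granted, last_used, now))
```

Identities that appear in the result are candidates for review before they are allowed to invoke an agent, since their granted access exceeds their observed need.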

Where this requires investment: You need infrastructure that continuously observes identity behavior and establishes dynamic trust boundaries. You can't implement this with quarterly access reviews and static RBAC policies. You need real-time visibility into the delegation chain.

Summary Matrix

| Factor | Static IAM | Approval-Gated | Delegation-Based |
|---|---|---|---|
| Setup complexity | Low | Medium | High |
| Operational overhead | Low | High | Medium |
| Scales with agent volume | No | No | Yes |
| Detects compromised delegators | No | No | Yes |
| Audit trail completeness | Agent actions only | Agent + approval | Full delegation chain |
| Addresses identity dark matter | No | No | Yes |
| Best for | Single-purpose, low-risk agents | Infrequent high-risk actions | Multi-context, production agents |

The right choice depends on where you are in AI agent adoption and how much identity dark matter exists in your environment. If you're running a pilot with one agent performing read-only analysis, start with static IAM. If you're scaling agents across teams and functions, you need the delegation-based model—because the alternative is treating every agent as a privileged service account and hoping your quarterly reviews catch problems before they cascade.

The agents themselves aren't the risk. The unverified delegation chains that empower them are.
